We’re convinced: software development has changed fundamentally since November 2025. What was previously considered a senior skill (architecture, code review, speed) still matters. But the daily typing has gone agentic. “Senior engineer” is being redefined by current tool maturity, not by years of experience. And most engineering teams in Germany haven’t seriously started yet.

That has a consequence no CTO can ignore in 2026: hiring has to happen completely differently. Yesterday’s job ad filters out exactly the experts who have already made the leap to agentic engineer. We see it in every other conversation.

This article is the overview of the entire theme of agentic engineering and hiring 2026: what has changed, why speed has shifted to judgment, what the wrong filter looks like, what should be in the brief instead, and how the pacemaker model works. The existing deep-dives on awareness, diagnosis, sourcing, and selection are linked in the funnel overview at the end; the pacemaker model, governance patterns, and banking will follow over the coming weeks.

Karpathy’s pivot: “Vibe coding is passé”

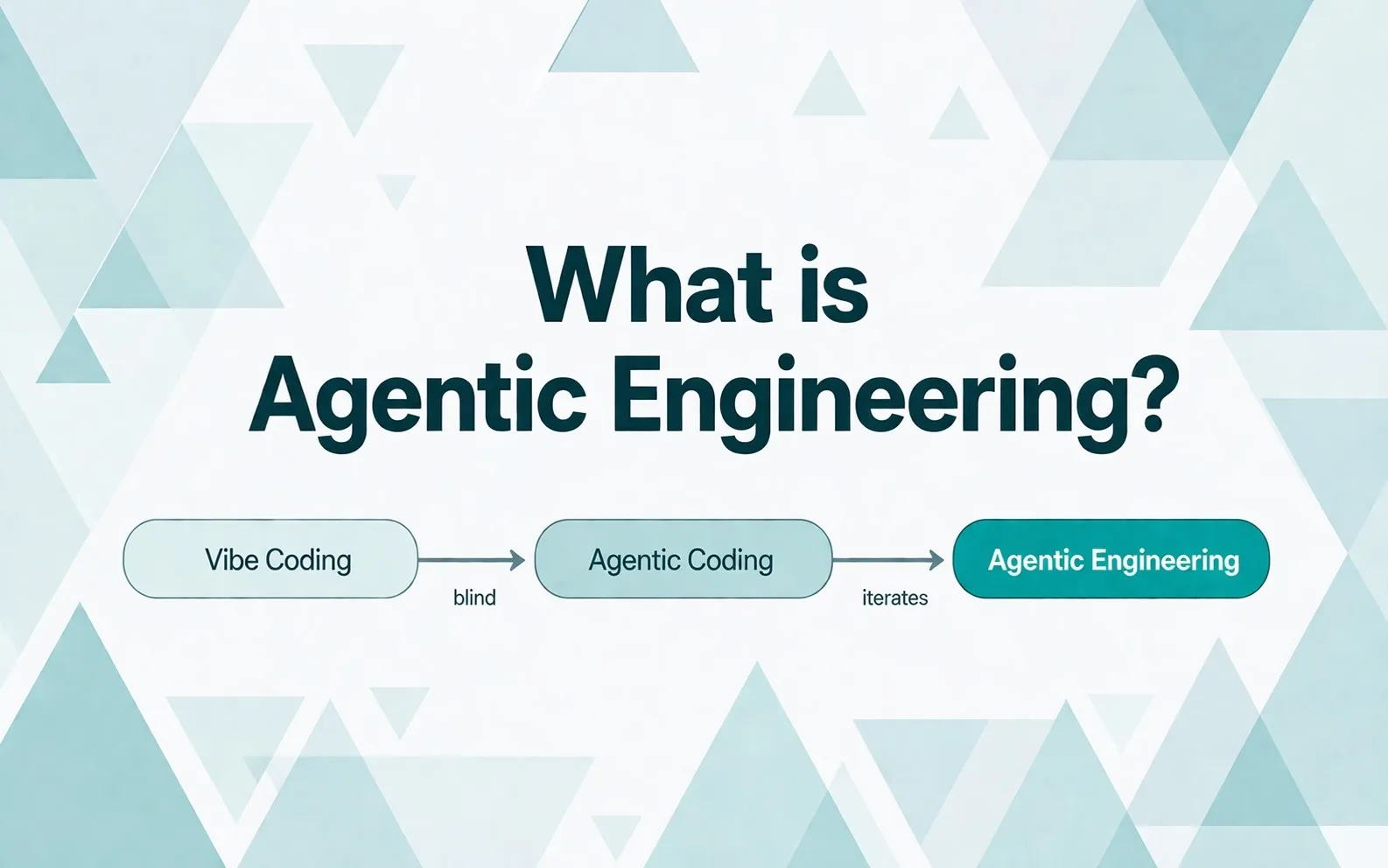

The term agentic engineering has a clear origin. And a predecessor that the same person retired one year later. If you want to understand the new vocabulary of the AI engineering world in 2026, this is where you start.

In February 2026, Andrej Karpathy, formerly Director of AI at Tesla and a co-founder of OpenAI, used Sequoia’s AI Ascent to retire a term he had coined exactly one year earlier. “Vibe coding,” the practice of trusting an AI without code review, was passé. The new default was agentic engineering.

Karpathy’s reasoning was precise:

“Agentic, because you’re no longer writing the code yourself 99 percent of the time, you’re orchestrating agents. Engineering, because there’s art, science, and expertise behind it.”

The difference isn’t semantic. It’s operational. Karpathy himself flipped his own 80% coding / 20% delegating ratio to 20/80 in December 2025. Same person, different operating mode.

Boris Cherny, inventor and lead engineer of Claude Code at Anthropic, framed the historical scale of this leap with a Renaissance parallel: “Before the printing press in the 15th century, about 10 percent of the European population could read. The printing press was invented, and over the next few hundred years, global literacy rose to 70 percent.” Applied to software in 2026: “Software becomes something fully democratized that anyone can do.” If you still believe in 2026 that the shift is comparable to cloud or mobile, you’re thinking too small. The better comparison is the printing press.

In April 2026 it became even clearer: with Claude Opus 4.7 and GPT-5.5, a new maturity level was reached. This is no longer gradual progress. This is a different tier.

If you want to follow the term further: Simon Willison maintains a chapter-by-chapter pattern library starting February 2026, IBM offers a conceptual definition, and Codecentric has published one of the few German tech-blog articles on the topic. Our own definitional deep-dive, with the three-stage distinction between vibe coding, agentic coding, and agentic engineering, is in What is Agentic Engineering.

What has measurably changed since November 2025

The METR study is the most direct before-and-after evidence because it compares the same methodology with partly the same participants over time:

- Early 2025: experienced open-source developers (median 10 years experience) were 19% slower with AI than without. Confidence interval +2% to +39%. Cause: prompt overhead, verification effort, context loss with the tools of the day.

- February 2026: the same participants are now working roughly 18% faster with AI. Confidence interval -38% to +9%.

A swing of 37 percentage points in 12 months, with identical study methodology. METR itself names the adoption of agentic tools as the main cause. Not better prompts. Different workflow.

More interesting still: METR judges its own numbers to be an underestimation of the real effect. The reason: the most productive AI users drop out of the study. 30 to 50 percent of participants deliberately don’t submit tasks because they no longer want to do them without AI.
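The arithmetic behind the swing can be sketched in a few lines. This is a minimal sketch, not METR’s own analysis: the `Estimate` class is a hypothetical helper, and the sign convention (positive means slower with AI) is our reading of the intervals as quoted above. It also shows that the February 2026 interval, as quoted, still includes zero, which is worth keeping in mind when citing the 18%.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Estimate:
    """Change in task completion time with AI, in percent (positive = slower).

    Hypothetical helper; the sign convention is an assumption read off
    the intervals quoted in the text.
    """
    label: str
    point: float
    ci_low: float
    ci_high: float

    def excludes_zero(self) -> bool:
        # True when the whole interval sits on one side of zero.
        return self.ci_low > 0 or self.ci_high < 0


early_2025 = Estimate("early 2025", point=19.0, ci_low=2.0, ci_high=39.0)
feb_2026 = Estimate("February 2026", point=-18.0, ci_low=-38.0, ci_high=9.0)

# Swing between the two point estimates, in percentage points.
swing_pp = early_2025.point - feb_2026.point

print(f"swing: {swing_pp:.0f} percentage points")
for e in (early_2025, feb_2026):
    print(f"{e.label}: interval excludes zero = {e.excludes_zero()}")
```

The swing is a difference between two point estimates, so it inherits the uncertainty of both intervals.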

The cutoff date: agentic engineering becomes enterprise-ready

The maturity leap can be traced back to a few releases. This table is the strongest evidence that “agentic engineering” isn’t a linguistic trend but a technological shift with a clear date.

| Date | Release | What changed operationally |
|---|---|---|
| November 24, 2025 | Claude Opus 4.5 | Multi-step tool use became reliable, the cutoff date |
| December 11–18, 2025 | GPT-5.2-Codex | Comparable maturity at OpenAI |
| December 18, 2025 | Agent Skills | Reusable context packages, team knowledge became modular |
| February 5, 2026 | Claude Opus 4.6 | Agent Teams out-of-the-box, coordinated multi-agent execution |
| March 5, 2026 | GPT-5.4 | Jump on OSWorld (desktop automation) from 64% to 75% |
| April 17, 2026 | Claude Opus 4.7 | SWE-bench Verified from 80.8% to 87.6%, first time over the 80 percent mark |
| April 22, 2026 | GPT-5.5 | Terminal-Bench 2.0 at 82.7% state-of-the-art |
| April 28, 2026 | Claude Code v2.0 | Rust control plane, operator workflows, desktop dashboard for enterprise |

Anyone with productive agentic engineering practice today has built it, by necessity, between November 2025 and April 2026. No earlier window exists.

Greg Brockman, President of OpenAI, has confirmed the same leap from the supplier perspective: “In the course of December alone, we jumped from these agentic coding tools writing 20 percent of the code to 80 percent. Which means they’re going from a side activity to the central thing you do all day.” A factor of 4 in one month. That’s the maturity acceleration behind the release dates in the table above.

If you want to know what the upper end looks like: Cherny reported at Sequoia’s AI Ascent 2026 that he merged 150 pull requests in a single day. His setup: 5 to 10 parallel sessions, several hundred agents at once, plus dozens of cron-triggered loops running overnight. Agents that monitor CI health, auto-rebase PRs, cluster Twitter feedback. “Loops are the future,” Cherny says. That’s exactly the practice leap Anthropic experienced internally between October and November 2025, almost overlapping with the METR finding.

Cherny puts it even more sharply: “For me, coding is solved.” With the qualifier: “For me. Not everywhere: there are big complicated codebases, weird languages the model doesn’t yet handle.” That honest disclaimer is just as important as the claim. What works at Anthropic in a 100% TypeScript/React codebase isn’t the reality at every DAX-listed bank. But it’s the maturity peak that shows what’s possible.

Anthropic as proof-of-practice: 74 releases in 52 days

If anyone has doubts about whether this is enterprise-ready, look at Anthropic itself. Between February 1 and March 24, 2026, Anthropic shipped 74 product releases across all product lines.

That’s 1.4 releases per day. In parallel.
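For anyone who wants to check the cadence, here is a minimal sketch of the calculation. It assumes the window counts both February 1 and March 24; the variable names are ours.

```python
from datetime import date

start = date(2026, 2, 1)
end = date(2026, 3, 24)
releases = 74

# Count both the first and the last day of the window (assumption).
days = (end - start).days + 1
per_day = releases / days

print(days, round(per_day, 1))
```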

The list of release highlights would fill several quarters at most enterprise software companies: Claude Opus 4.6, Sonnet 4.6 with a 1M-token context window, free memory for all users, Excel and PowerPoint integration, Code Review, Code Security, Computer Use, Voice Mode, Channels for Telegram and Discord.

The decisive point for any CTO:

Anthropic builds Claude Code with Claude Code. Roughly 80% of technical staff use it daily. 90% of the code in Claude Code is written by Claude Code itself.

A team that ships like this isn’t bigger. It’s leveraged differently: senior people. Sharp judgment. High AI tool deployment. No middle layer slowing down the tempo.

What this means in Germany

In Germany, around 149,000 IT positions are unfilled as of May 2026, 12,000 more than a year earlier. 70% of companies report a shortage of IT specialists (Bitkom Study Report 2026).

At the same time, only 21% of large companies with 250+ employees are actively deploying AI against the talent shortage (Bitkom press release February 2026). Among midsize firms with 50–249 employees, it’s 12%. Among smaller ones, 7%. Among micro-businesses under 10 employees: 2%.

42% of companies expect new IT roles to emerge through AI. But there’s a wide gap between “expect” and “have hired someone who works that way” — and that gap is what this article is about.

Speed has become commodity. Judgment is the new bottleneck.

As long as “writing code” was scarce and expensive, speed was the lever. Type quickly, review code quickly, debug quickly. Whoever was fast was valuable.

What happens when output gets cheap? The bottleneck moves. To the decision about what should be built in the first place. To the question of what “good” means in a specific business context. To the ability to tell a “nearly right” suggestion from a useful one.

Sequoia framed this shift precisely in an essay in April 2026. Julien Bek, an investor at Sequoia Capital focused on AI infrastructure, distinguishes intelligence (applying rules, translating specs into code, testing, debugging) from judgment (which feature when, which architectural debt to take, when to ship). AI has crossed the intelligence threshold. Judgment stays human.

Concretely:

Every AI improvement makes the tool cheaper and judgment more valuable.

In April 2026, Karpathy sharpened the spread: “People used to talk about the 10x engineer. I think this is amplifying significantly. 10x is not the speedup you get. The people who are really good at this go much higher than 10x.” Plainly: the strong senior who works agentically is extremely leveraged. The weak senior with the same tools may even slow down because they produce output that nobody can review. Talent density in the agentic engineering area in 2026 isn’t a gradual lever. It’s exponential.

Brockman put this even more concretely in early May 2026. His point: when doing things gets cheap, scarcity moves to human attention. “Doing things is now easy. The question ‘Is this good? Is this what I wanted? Does it match my values?’ becomes the most important bottleneck of all.” He illustrates it with an anecdote: his Codex agent pinged a Slack colleague with a question, then pinged the colleague’s manager two minutes later. “This is taking too long, I escalated.” Technically correct, socially off. That’s exactly the gap where it gets decided whether an engineering team is agentically productive or agentically chaotic.

When a weak operator produces 500 lines of plausible-looking but useless code in an hour with AI: what does that cost a team? Not the hour. The days a senior spends cleaning it up. The weeks an architectural mistake travels through the codebase.

AI makes bad hiring more expensive, not cheaper. That’s the real argument of 2026.

The market data confirms it

77% of business leaders say in 2026 that AI is increasing, not reducing, their need for specialized fractional talent. Gartner reports the same year that headcount growth in engineering organizations has dropped from 6% to 2% while tech budgets are growing in double digits. The money is flowing from people to compute.

Tomasz Tunguz, general partner at Theory Ventures, proposed in early 2026 that tokens are now the fourth component of engineering compensation: salary, bonus, equity, inference compute. For senior engineers, token costs already account for over 20% of fully loaded cost.
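What a 20% share looks like in practice can be sketched with a small calculation. This is an illustration under stated assumptions, not Tunguz’s model: the `Compensation` class is hypothetical, “fully loaded cost” is simplified to the four components named above, and every figure is invented.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Compensation:
    """Annual figures in one currency. All sample values are hypothetical."""
    salary: float
    bonus: float
    equity: float
    inference_compute: float

    @property
    def fully_loaded(self) -> float:
        # Simplification: only the four components named in the text.
        return self.salary + self.bonus + self.equity + self.inference_compute

    @property
    def compute_share(self) -> float:
        return self.inference_compute / self.fully_loaded


# Hypothetical senior profile, invented for illustration only.
senior = Compensation(
    salary=120_000, bonus=15_000, equity=25_000, inference_compute=45_000
)
print(f"compute share: {senior.compute_share:.1%}")
```

With these invented numbers, compute lands just above the 20% mark. A real calculation would also include employer-side costs, which lowers the share.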

OpenAI Codex’s engineering lead has reported that candidates in interviews now ask how much dedicated inference compute they will get. Not the salary. Not the equity package. Compute.

But compute without judgment is just a bigger bill. Tokens pay for execution. Someone has to decide what should be built, when to ship, what tradeoffs are acceptable. That takes experience. Senior people who have already made decisions like that.

For more on this shift: we worked through the economic consequence for engineering org design in our token-KPI deep-dive.

Hiring: the wrong filter

What happens when a CTO starts looking in May 2026?

The most common job ad reads roughly: “We’re looking for a senior engineer with multiple years of experience in enterprise AI implementation, established agent toolchain, and a track record in regulated environments.”

Sounds reasonable. It isn’t.

“Enterprise agentic experience” as a hiring criterion logically doesn’t work in 2026. The methodology has been productively mature since November 2025. That’s six months. In those six months, no regulated company in Germany has pulled off a serious agentic engineering rollout, because procurement, legal, data protection, and architecture think in quarters, not weeks.

Asking for years means looking for a person who can’t exist. The candidates who present themselves as “enterprise agentic veterans” almost always have a gap between the claim and the actual tool setup. We test for this in every discovery call and find it again and again.

What this systematically filters out: exactly the senior practitioners who worked on current tools between November 2025 and April 2026, not in two-year-old legacy stacks. The “enterprise experience” requirement removes them from the funnel.

Where to find the right senior profiles instead, and which four behavioral anchors belong in the brief, lives in Find a Senior Agentic Engineer 2026.

What should be in the brief instead

Four behavioral anchors that say more in 2026 than years of experience:

1. Current workflow over years of experience. Has the candidate worked between November 2025 and today with three to five parallel agents in worktrees? Do they write specs before code? Do they use plan mode? Do they run review agents over the implementation?

2. Their own skills in the repo. Modular, reusable context packages the candidate wrote themselves. Not downloaded. Not copied from a tutorial.

3. Subscription tier. “What tier do you run on Claude Code?” is a diagnostic question in 2026. If they’re on Pro at $20 a month, they aren’t using the tools seriously. Senior practitioners run Max 5x or Max 20x, or direct API. Anything less is hobbyist level.

4. Honest failure handling. “Tell me about a moment when the agent built complete nonsense.” If they only have success stories, they either have little real practice or no self-reflection. Both are disqualifying.
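The four anchors can be captured as a simple interviewer checklist. This is a minimal sketch with hypothetical names, not our actual interview format: it only counts which anchors are met and applies the one hard rule stated above, that a missing failure story is disqualifying.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AnchorCheck:
    """Interviewer notes for one candidate; field names are hypothetical."""
    current_workflow: bool      # parallel agents, specs before code, plan mode, review agents
    own_skills_in_repo: bool    # self-written, reusable context packages
    serious_tier: bool          # Max 5x, Max 20x, or direct API
    honest_failure_story: bool  # can describe a moment the agent built nonsense

    def anchors_met(self) -> int:
        return sum((
            self.current_workflow,
            self.own_skills_in_repo,
            self.serious_tier,
            self.honest_failure_story,
        ))

    def disqualified(self) -> bool:
        # The text treats a missing failure story as disqualifying.
        return not self.honest_failure_story


# Hypothetical candidate: strong workflow, own skills, Pro tier, honest about failures.
candidate = AnchorCheck(
    current_workflow=True,
    own_skills_in_repo=True,
    serious_tier=False,
    honest_failure_story=True,
)
print(candidate.anchors_met(), candidate.disqualified())
```

Booleans flatten what is really a conversation, so the checklist works best as a note-taking aid after the interview rather than as a scoring gate.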

In April 2026, Karpathy said what this article argues at its core: “Most people haven’t yet rebuilt their hiring process for agentic engineering capability. If you’re handing out puzzle questions, you’re still in the old paradigm.” That’s exactly the gap we address with our 45-minute interview format. Karpathy describes it; we deliver the operational step toward the solution.

We work out how these four anchors get checked in a 45-minute interview in a dedicated format with 21 concrete questions, listed under Selection in the overview at the end.

Senior freelancer with domain plus track record

Domain depth isn’t a differentiator anymore in 2026. It’s table stakes.

By domain we mean concrete stack and industry depth: the senior who has built Java Spring Boot backends in insurance IT for ten years. The iOS veteran who knows how SwiftUI actually runs in a codebase with Objective-C legacy. The full-stack engineer with React and Node experience in high-traffic e-commerce. The Next.js and TypeScript practitioner who debugged SSR caching on Vercel and Cloudflare until it stuck. That’s domain.

The real USP of a senior freelancer is domain plus a verifiable agentic engineering track record: current tool setup, their own skills, parallel agents, spec-driven workflow, token awareness. Paired with the stack depth above, that creates the magic a classic senior alone can’t produce. Anyone bringing that can change how an engineering team operates in weeks.

What sets these senior profiles apart from classic consultants: they ship like every other engineer. But at the tempo and with the workflows the team is still building out. The team calibrates against the visible example.

A market observation: 61% of freelancers actively use generative AI in their workflows; among full-time employees it’s 40% (DemandSage 2026). External people have structural pressure to stay current. The next client asks what they can do. Full-time employees can freeze in a stack that emerged two years ago.

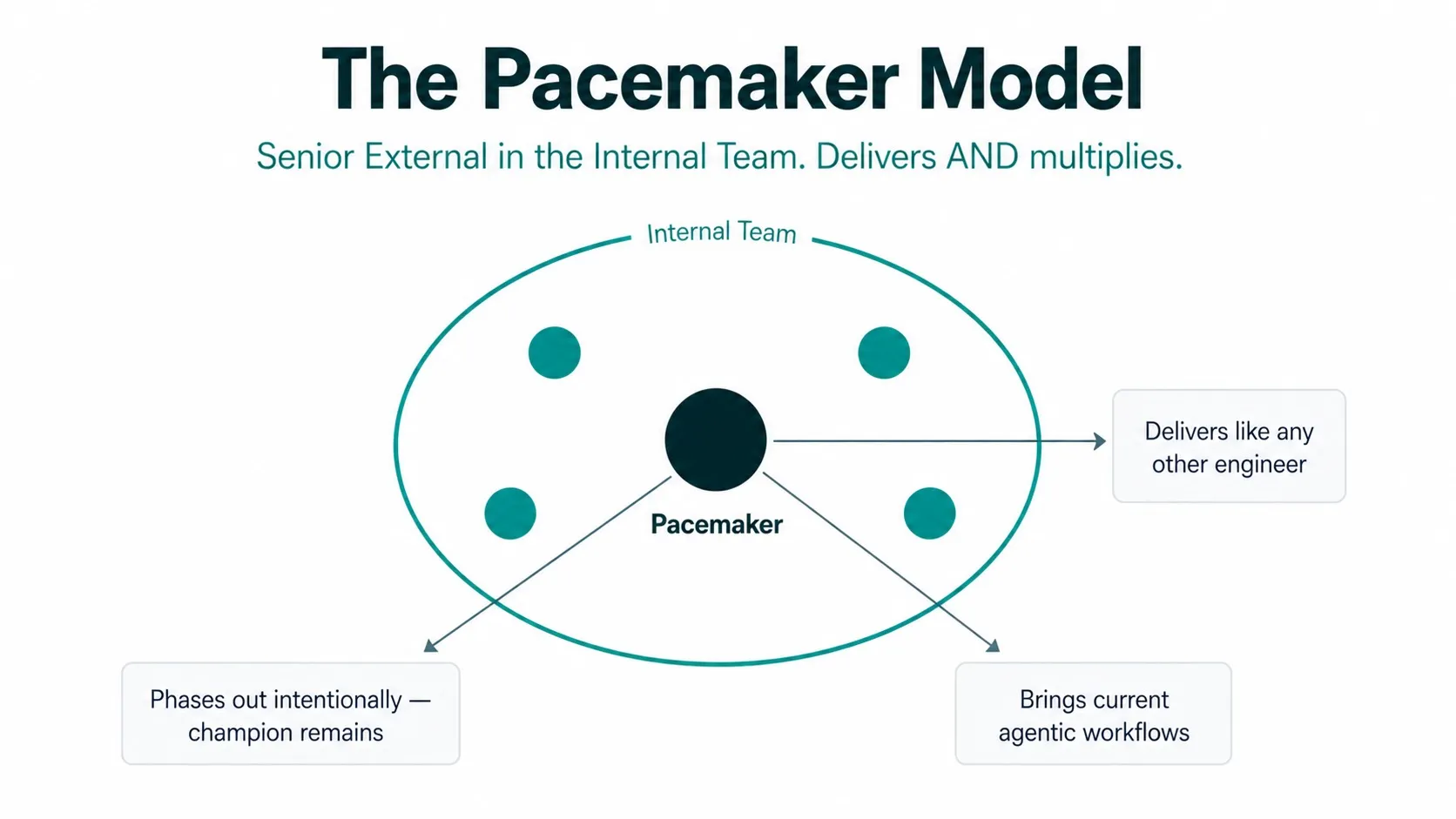

The pacemaker model

Engineering teams in enterprise environments rarely get to work with current tools. Procurement, legal, and security clearances take quarters, not weeks. What’s missing is the counter-movement from the outside: someone who already knows the next stage and against whom the team can recalibrate.

The solution lies in the shared understanding senior engineers have about architecture and best practices, paired with current agentic workflows adapted so they remain practical for enterprise compliance and business. But for that, someone has to be in the room who knows what’s at the cutting edge.

The ElevateX pacemaker model: an experienced external senior engineer with current agentic engineering maturity sits as a regular team member in the middle of the team and from there sets the rhythm at which agentic work happens.

A pacemaker doesn’t raise the tempo for tempo’s sake. It establishes the rhythm in which the team can work agentically. Speed is the consequence, not the goal.

Three mechanics distinguish the model from classic consulting or classic interim reinforcement:

- Delivers AND multiplies. The pacemaker is not a coach on the side. They are primarily an engineer with a delivery commitment. Knowledge transfer happens in the daily work, not in workshops.

- Sits in the middle, not on the outside. Regular team member, regular standup, regular PR review. No separate reporting line. No consultant role.

- Disappears as planned. When the pacemaker leaves, the internal AI champion remains: the person on the team who is respected and who carries the workflows forward.

The model isn’t an invention. Interim management has worked with the same mechanic for 30 years. What’s different in 2026: the knowledge gap doesn’t sit between junior and senior, but between “before the agentic leap” and “after the agentic leap.” The external person brings the tool setup, the practices, the workflows. None of these are available off the shelf — only in the doing.

A deep-dive is coming soon: The Pacemaker Model for Engineering Teams.

Boris Cherny described from his own Anthropic reality where things are heading: “Everyone on the Claude Code team codes. Our engineering manager, our product manager, our designers, our data scientist, our finance person, our user researchers — every single person on the team writes code.” When everyone codes, the classic engineering org structure breaks open. What remains are senior generalists who combine engineering, product, and domain in one person. And those are exactly who become bottleneck hires in 2026.

Where the limits are

The same market that produced the METR data also produces findings that warrant caution. Four of them belong in any honest hiring brief in 2026.

PR size and bug count rise without process adjustment. The Faros AI paradox report (10,000 developers, 1,255 teams, June 2025) shows: AI users write more code and parallelize more. But PR size grows by up to 150%, bug count by 9%, DORA metrics stay flat. The bottleneck shifts from coding to review.

AI code ages faster. In 2025, GitClear documented a 41% higher churn rate for AI-generated code. Experienced developers produce around 10% more lasting code with AI. The real gain is significantly smaller than the perceived gain.

Security isn’t on autopilot. BaxBench (ETH Zürich / UC Berkeley, 2026): 62% of AI-generated backend solutions are flawed or contain security vulnerabilities. Even the best model produced only 56% safe and correct solutions without specific security prompting.

“Savings” aren’t automatically real. In a September 2025 enterprise survey, Bain & Company described the real-world savings from AI coding tools as “unremarkable.”

That’s the other side of the METR story. The productivity gains are real, with clear scope, adapted processes, and experienced users. Without process adjustment, they evaporate in review bottlenecks.

That’s exactly why the team needs senior engineering experience that masters these workflows. We’ll dig into the five workflow patterns from regulated practice in a dedicated upcoming article (Governance Emerges in the Workflow); the banking transfer follows separately as Agentic Engineering in Banking 2026.

Overview of the deep-dives

For anyone wondering where they stand in their own hiring process, here are six stages with the matching deep-dives:

| Stage | Question | Deep-dive |
|---|---|---|
| 1. Awareness | What is agentic engineering, really? | What is Agentic Engineering |
| 2. Diagnosis | Where does our team stand, and what are we spending on tokens? | Token Spend as an Engineering KPI |
| 3. Sourcing | Who are we really looking for, and where do we find them? | Find a Senior Agentic Engineer 2026 |
| 4. Selection | How do we test agentic maturity concretely? | 45-Minute Format with 21 Questions |
| 5. Integration | How do we extract impact from this? | Pacemaker Model · Governance Patterns (coming soon) |
| 6. Scaling | How does this work in regulated industries? | Agentic Engineering in Banking (coming soon) |

Why now

Bitkom described the gap: 149,000 unfilled IT positions in Germany as of May 2026. 70% of companies report a shortage. But only one in five large companies is actively deploying AI against it.

At the same time, the maturity threshold shifted again in April 2026. The tools are there. The methodology is mature. What’s missing are the people who know how to produce value with them.

The gap doesn’t close through more hiring. It closes through different hiring. Senior engineering capacity that operates agentically doesn’t replace one, two, or three classic engineers. It changes what becomes possible in the same team.

Anyone still searching in May 2026 for “enterprise agentic experience with five years’ track record” is waiting for a person who doesn’t exist. Anyone who instead brings a senior with current tool maturity into the team (not as a consultant, but as an engineer who delivers in the middle) changes the tempo of the entire team in weeks, not quarters.

In an early-May 2026 hyperagent talk, Howie Liu, founder of Airtable, condensed it into an analogy that’s central for hiring decisions: “Imagine Albert Einstein — generally highly intelligent. But he doesn’t know anything about real estate. Give him the right brief — a playbook, a manual — and he goes and solves it.” Translated to hiring in 2026 that means: the models are Einstein. What’s scarce are senior engineers who can feed the models with the right context. Domain knowledge plus current tool maturity are the levers, not years of experience with tools that didn’t exist six months ago.

That’s the pacemaker model. And that’s the hiring question that really counts in 2026.

If you’re in a similar phase

I respond personally. Drop me a quick note on LinkedIn, wherever you’re at: what your engineering maturity looks like, where the urgent need is, what hasn’t worked so far. No pitch, no form. An honest read on whether and how we can help.

Or send a concrete request to our team — we get back within 48 hours with a first proposal.
