We’re convinced: agentic engineering is not a new buzzword. It describes a different job. The senior engineer who was typing code in an editor through November 2025 has, since early 2026, been orchestrating agents. Andrej Karpathy calls it the successor to vibe coding. We see it in nearly every conversation we have with senior freelancers in 2026.

In practice that means: anyone working agentically writes code by hand only 5 to 15 percent of the time. The rest is specs, tests, plan modes, and multi-agent workflows.

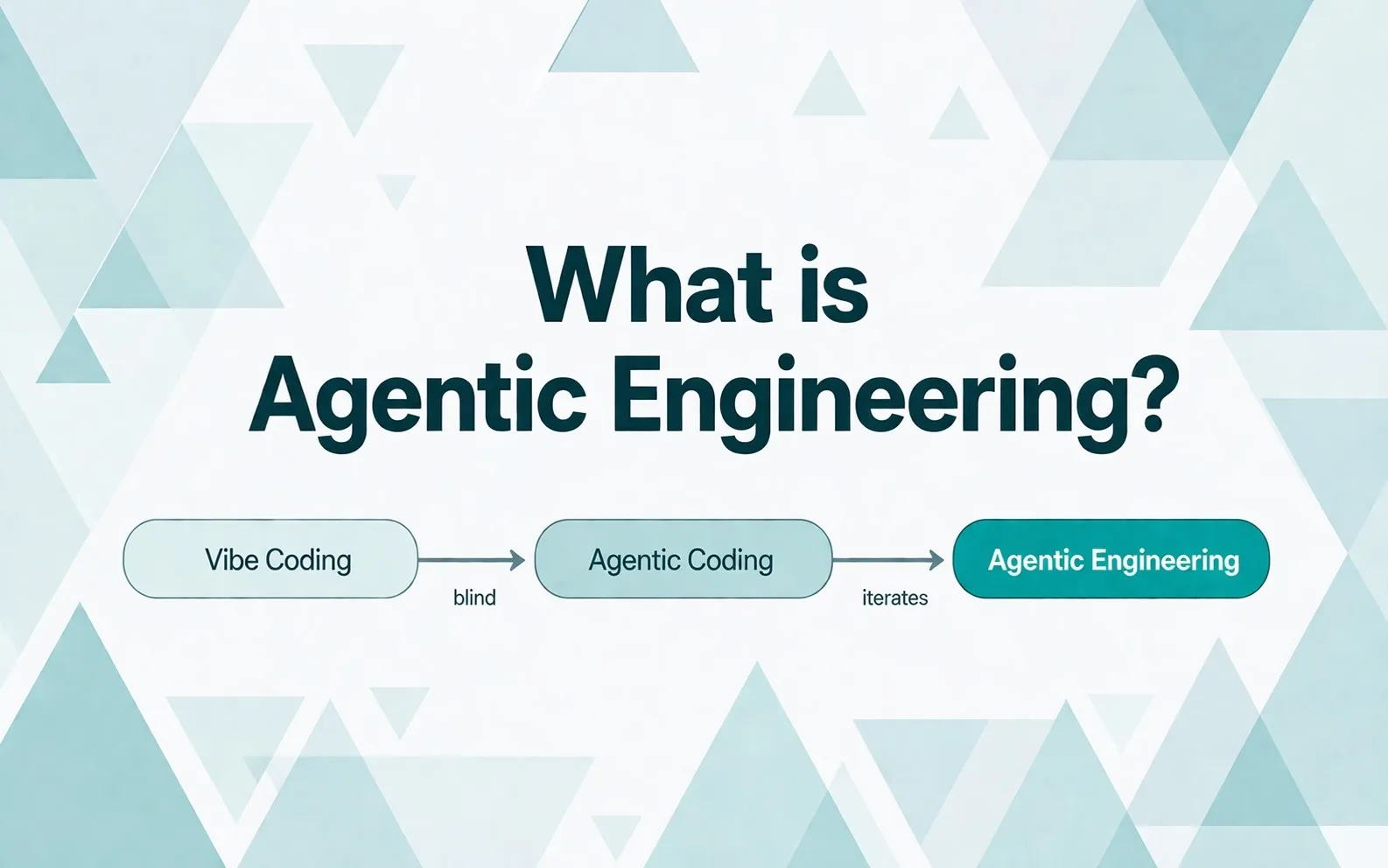

This article is the definitional deep-dive in our cluster on Agentic Engineering and Hiring 2026. It clears up three things: how the term came about, how it differs from vibe coding and agentic coding, and what it means for hiring in 2026.

A year ago to the day, the same person coined a different term. Now he’s retiring it himself.

On February 2, 2025, Andrej Karpathy, formerly Director of AI at Tesla and a co-founder of OpenAI, posted a tweet on X that hit 4.5 million views within weeks. His definition of “vibe coding” was as radical as the name:

“There’s a new kind of coding I call vibe coding, where you fully give in to the vibes, embrace exponentials, and forget that the code even exists.”

The longer thread fleshed out what that looks like day-to-day:

“I barely touch the keyboard. I just always ‘Accept All’, I don’t read the diffs anymore. When I get error messages, I just copy paste them in with no comment, usually that fixes it. The code grows beyond my usual comprehension, but it’s not really coding. I see stuff, say stuff, run stuff, copy paste stuff, and it mostly just works.”

In April 2026 he retired the term.

Karpathy’s pivot

At Sequoia’s AI Ascent 2026 he delivered one line that captures the entire shift in two seconds:

“Vibe coding raised the floor. Agentic engineering raises the ceiling.”

Hobbyists and prototype builders got a new floor through vibe coding. Code that wouldn’t have been written before is being written now. But for professional software with quality, security, and maintainability requirements, that isn’t enough. Agentic engineering raises the ceiling: not by coding without review, but by orchestrating with review.

What does it mean to be a person who has shifted from 80 percent self-coding to 20 percent? Karpathy describes himself in exactly those terms. In December 2025 the ratio flipped. It’s not a more efficient Karpathy. It’s a different job. His reasoning for the wording is precise:

“Agentic, because you’re no longer writing the code yourself 99 percent of the time, you’re orchestrating agents. Engineering, because there’s art, science, and expertise behind it.”

And the decisive contrast with vibe coding in a single sentence that fits on a conference slide:

“You can outsource thinking. You can’t outsource understanding.”

The full talk is worth watching if you want more context on the terminology pivot.

Why “engineering,” not “coding 2.0”

Karpathy places the pivot inside a longer arc. Software 1.0 was explicit logic: the human writes code, the computer executes. Software 2.0 was machine learning. The human curates data sets, the neural net learns the rules. Software 3.0 is agentic engineering: “your programming is becoming prompting, and what’s in the context window is your leverage over the interpreter that is the LLM.” The act of programming is no longer writing code. It’s building the right context for a model that does the computation itself. Hence the word engineering: this is about architecture, constraints, verification, and quality assurance. Not typing.

Three operational criteria

What separates agentic engineering from vibe coding isn’t words. It’s three operational properties. People who do all three are working agentically. People who do one or two are on the way, but not there yet.

Multi-step tool use with self-correction. The agent isn’t running a single prompt-and-answer round. It makes 20 to 200 tool calls in a run without anyone intervening between steps. It writes code, runs tests, reads the output, corrects, and keeps iterating until a success or stop criterion is met.

Long task horizons. An agentic task runs for minutes to hours, not seconds. The human defines outcome and guardrails; from there the agent works autonomously. An API migration across hundreds of files. A module refactor. A bug repro with a fix proposal.

Parallelization, in space and time. The senior practitioner runs three to five agents at once, on separate branches or in multiple instances of the same codebase. While one agent runs a migration, the second writes tests and the third reviews a PR. Boris Cherny of Anthropic adds a second axis: loops over time, meaning agents that run on cron every few minutes or hours. One watches CI health, one updates dependencies, one collects feedback. Working both axes is what looks senior in 2026.
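The first criterion, multi-step tool use with self-correction, comes down to a loop with a success criterion and a stop criterion. A minimal sketch in Python, under loud assumptions: `run_agent_loop`, `toy_run_tests`, and `toy_agent_step` are hypothetical names made up for illustration, and the "agent" here just clears one failing test per step instead of calling a real model or tool.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class RunResult:
    succeeded: bool
    tool_calls: int
    log: list[str] = field(default_factory=list)


def run_agent_loop(
    agent_step: Callable[[frozenset[str], str], frozenset[str]],
    run_tests: Callable[[frozenset[str]], tuple[bool, str]],
    state: frozenset[str],
    max_tool_calls: int = 200,
) -> RunResult:
    """Alternate test runs and fix attempts until tests pass or the budget is spent."""
    log: list[str] = []
    tool_calls = 0
    while tool_calls < max_tool_calls:
        # Tool call: run the tests and read the output.
        passed, output = run_tests(state)
        tool_calls += 1
        log.append(output)
        if passed:
            return RunResult(True, tool_calls, log)  # success criterion
        if tool_calls >= max_tool_calls:
            break  # stop criterion: budget exhausted
        # Tool call: let the agent attempt a correction based on the output.
        state = agent_step(state, output)
        tool_calls += 1
    return RunResult(False, tool_calls, log)


# Toy stand-ins (assumptions): the state is just the set of failing test names.
def toy_run_tests(failing: frozenset[str]) -> tuple[bool, str]:
    return (not failing, f"{len(failing)} failing")


def toy_agent_step(failing: frozenset[str], test_output: str) -> frozenset[str]:
    # Pretend the agent fixes exactly one failing test per step.
    return failing - {min(failing)}


if __name__ == "__main__":
    start = frozenset({"test_auth", "test_api", "test_db"})
    result = run_agent_loop(toy_agent_step, toy_run_tests, start)
    print(result.succeeded, result.tool_calls, result.log)
```

The explicit `max_tool_calls` budget is the design point: the human sets the guardrail once, and nobody has to intervene between steps.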

Anyone not doing this is doing classic AI-supported coding. The AI helps with typing, the human stays in the driver’s seat at every step. That’s also fine. But it’s a different league.

What it looks like in practice

Four people are worth watching.

Karpathy has documented his own transition publicly. What now defines his day isn’t typing, it’s navigation. He describes LLMs as “jagged ghosts”: ghosts with jagged capabilities. His current example (April 2026): “State-of-the-art Opus 4.7 simultaneously refactors a 100,000-line codebase or finds zero-day vulnerabilities, and still tells me to walk to a car wash 50 meters away on foot.” That’s the operational reality of jagged intelligence: on some tasks the models fly, on others they struggle. People who don’t recognize that and verify the output get plausible-sounding nonsense. The engineer learns day-to-day which agent is good for which task and in what order to deploy them.

Peter Steinberger, longtime iOS developer and former founder of PSPDFKit, has a different style. He moved to Codex CLI fully in early 2026 and typically runs three to eight instances in parallel in a 3x3 terminal grid, most of them in the same folder, without git worktrees. He tried worktrees and gave them up again because they slowed him down. Instead, he picks his work areas so the agents don’t trip over each other. His AGENTS.md file is around 800 lines long and grows through what he calls “organizational scar tissue”: every time something goes wrong, a brief note gets added. That’s the voice of a practitioner, not a theorist.

For an 18-minute look at how this plays out in practice, watch the TED talk Steinberger gave in April 2026. He tells the story of his AI agent OpenClaw, including the Morocco anecdote that shows what multi-step autonomy means in concrete terms. He sent his bot a voice message even though he had never programmed voice support. Nine seconds later, the agent had inspected the audio file, identified the format, found an OpenAI key, and replied. That isn’t theory. That’s the operational reality we’re describing here.

Simon Willison, co-creator of Django and one of the best-known indie voices on Python and LLM engineering, documents the patterns that other people only apply intuitively. Since February 2026 he’s been writing a pattern library called Agentic Engineering Patterns, 12 chapters as of writing, with one or two added every week. Three examples of what’s in there: “Code is now cheap” means the cost of code itself has dropped, so write more exploratory and disposable code. “Red/green TDD” means test-first helps agents write more precise code with less additional prompting. “Collect what you can do” means reusable skill files as a team knowledge store.
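To make the red/green idea concrete, here is a small Python sketch of the test-first order. It is an illustration only, not taken from Willison’s pattern library: `slugify`, `slugify_stub`, `CASES`, and `check` are hypothetical names. The test cases are written first and act as the spec; the stub fails them (red), and the implementation that follows passes them (green).

```python
from typing import Callable

# Step 1: write the expectations first. They are the spec handed to the agent.
CASES = [
    ("Agentic Engineering 2026", "agentic-engineering-2026"),
    ("  Plan   Mode ", "plan-mode"),
]


def check(impl: Callable[[str], str]) -> bool:
    """Return True if the implementation satisfies every case (green)."""
    try:
        return all(impl(text) == expected for text, expected in CASES)
    except NotImplementedError:
        return False  # red: nothing implemented yet


# Step 2: confirm the tests fail before any code exists (red).
def slugify_stub(title: str) -> str:
    raise NotImplementedError


# Step 3: write just enough code to make the tests pass (green).
def slugify(title: str) -> str:
    return "-".join(title.lower().split())


if __name__ == "__main__":
    print("red:", not check(slugify_stub))
    print("green:", check(slugify))
```

Seeing the red state first matters because it shows the tests can actually fail, so a later green result carries information.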

Boris Cherny, inventor and lead engineer of Claude Code at Anthropic, is the practice peak that shows how far the spectrum reaches. At Sequoia’s AI Ascent 2026 he openly shared that he has Claude write 100 percent of his code. On a peak day he merged 150 pull requests. His setup is different from Steinberger’s parallel instances or Willison’s pattern discipline: Cherny additionally runs loops over time. Agents that run on cron every few minutes or hours: one babysits PRs and fixes CI, one keeps the test suite healthy, one clusters Twitter feedback every 30 minutes. “Loops are the future,” Cherny says. So parallelization isn’t just a spatial axis (multiple agents at the same time) anymore. It’s also a temporal axis.
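The two axes can be sketched in a few lines of Python. This is a toy model under stated assumptions, not Cherny’s or Steinberger’s actual setup: `AgentTask`, `run_agent`, `run_in_parallel`, and `due_jobs` are hypothetical names, the branch names are invented, and `run_agent` only returns a string where a real setup would launch an agent process. Apart from the 30-minute feedback job, the intervals are made up.

```python
from concurrent.futures import ThreadPoolExecutor
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentTask:
    branch: str  # separate branch per agent so they don't collide
    goal: str


def run_agent(task: AgentTask) -> str:
    # Placeholder (assumption): a real version would start an agent here.
    return f"{task.branch}: {task.goal} (done)"


def run_in_parallel(tasks: list[AgentTask], max_workers: int = 5) -> list[str]:
    """Spatial axis: several agents at the same time, results in task order."""
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        return list(pool.map(run_agent, tasks))


def due_jobs(intervals_min: dict[str, int], minute: int) -> list[str]:
    """Temporal axis: which recurring jobs fire at this minute of the day."""
    return [name for name, every in intervals_min.items() if minute % every == 0]


if __name__ == "__main__":
    tasks = [
        AgentTask("agent/migration", "run API migration"),
        AgentTask("agent/tests", "write tests"),
        AgentTask("agent/review", "review PR"),
    ]
    for line in run_in_parallel(tasks):
        print(line)

    # Hypothetical intervals in minutes, except the 30-minute feedback job.
    loops = {"babysit-prs": 5, "test-suite-health": 60, "cluster-feedback": 30}
    print(due_jobs(loops, minute=30))
```

In a real setup the scheduling would typically be left to cron itself; the `due_jobs` helper only illustrates that each loop has its own interval.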

Four styles, one shared reality: they write code by hand at most five to fifteen percent of the time. The rest they orchestrate.

Karpathy puts the scale even more bluntly: “People used to talk about the 10x engineer. I think this is amplifying significantly. 10x is not the speedup you get. The people who are really good at this go much higher than 10x.” What Cherny shows with 150 PRs on a peak day, Steinberger with three to eight parallel instances, and Willison with his pattern library is no abstraction. It’s the upper half of a distribution that is currently spreading out dramatically.

Vibe, coding, engineering: three stages, not synonyms

A difficulty in the current discussion: the terms get used as synonyms. They aren’t. IBM published a topic page in April 2026 that breaks the spectrum down cleanly. It helps because it sets out a clear gradient.

| Term | Who steers? | How much review? | Useful for |
|---|---|---|---|
| Vibe coding | Human blindly accepts whatever the agent produces | No review. “Accept All”. | Prototyping, hobby projects, throwaway code |
| Agentic coding | Human orchestrates agents with defined roles | Review between steps, iteration | Mid-sized work, clearly bounded tasks |
| Agentic engineering | System of multiple agents with tests, observability, human architectural ownership | Multi-layered: AI reviews AI, human reviews final output | Production-grade software, regulated environments |

The three modes don’t exclude each other. Teams often start with vibe coding for fast prototyping, move to agentic coding in the middle phase, and arrive at agentic engineering once the stakes (security, maintainability, compliance) rise.

In a professional context, especially in regulated industries like finance or insurance, agentic engineering is the only viable mode. Vibe coding there is not a model. It’s a risk factor.

“Agentic coding” is fading from the vocabulary

A small observation on the side, but important for anyone writing or speaking on the topic: “agentic coding”, the middle stage, is on the way out in industry usage in 2026.

Karpathy’s pivot accelerated the shift, but it was already underway. IBM, Glide, Software Mansion, and the entire Bloomberg-Stratechery-Pragmatic Engineer cluster now use agentic engineering as the standard term. Codecentric, one of the few German tech blogs that picked up the topic at all, uses the same vocabulary. The New Stack pushed the terminology change into the mainstream in April 2026 with the article “Vibe coding is passé.”

Anyone still saying agentic coding in 2026 is signaling that their vocabulary hasn’t been updated since spring. Fine for internal discussions. A weak position for job ads, pitches, and external communication.

What this means for hiring

If the term agentic engineering has only been established since April 2026, and the methodology has only been productively mature since November 2025, then no one has five years of agentic-engineering experience. Period.

What does exist are senior engineers who, between November 2025 and today, have worked with the current tools: Claude Code, Codex CLI, plan mode, multi-instance setups, their own skill files, spec-driven workflows. Six months of intensive practice is the maximum that’s physically possible in 2026.

Anyone asking for more is looking for a person who doesn’t exist. Where to find the right senior profiles is covered in Find a Senior Agentic Engineer 2026. Concrete questions for a 45-minute interview are in the interview guide with 21 questions.

If you’re working through this question right now

A lot of CTOs are wrestling with the same theme: “We’ve cleared Claude Code for the team, but they’re not picking up speed.” The tools are there. The methodology isn’t.

We place senior freelancers who bring that methodology with them. Not consultants, not workshop providers. Engineers who deliver in the middle of the team and build out the internal AI champion on the side.

Drop me a quick message on LinkedIn, wherever you are in the process right now. Or send a concrete request to our team.

![Agentic Engineering Hiring Interview 2026 [+21-Question PDF]](/_astro/agentic-engineering-interview-featured-de.BlvVAp5j_Z9ib7o.webp)