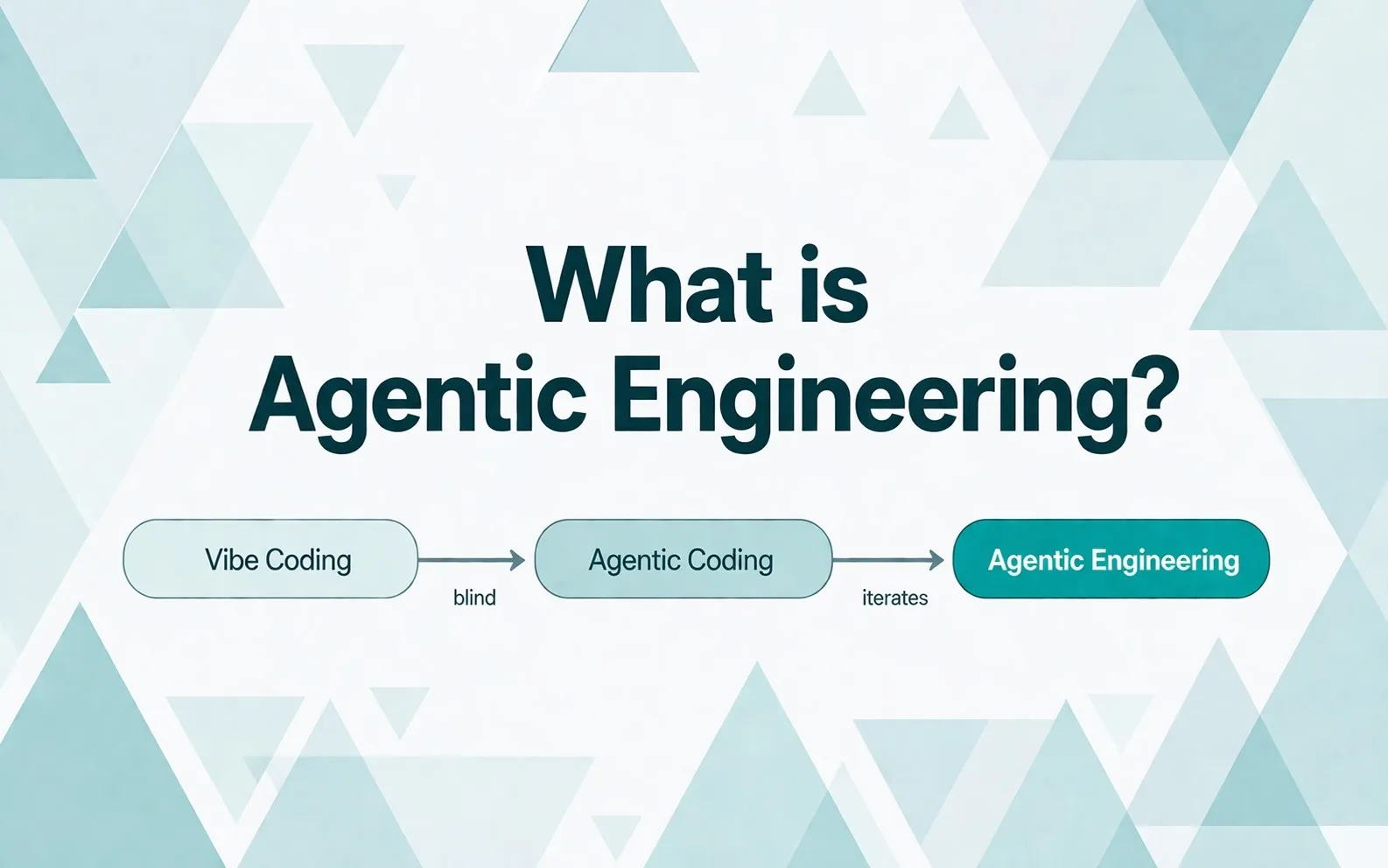

Job ads for agentic engineers work differently in 2026 than the classic “three years of experience with tech stack X.” You can’t write a hiring brief that demands years of experience with AI agents, let alone in an enterprise context. It can’t be fulfilled in practice, because agentic engineering has only been in productive use across the broader market since late 2025. Anyone who has read “What is Agentic Engineering” and “Token Spend as an Engineering KPI” knows the background.

What replaces the classic filter isn’t a new skill checklist, but a different question: how fast does this person adapt? Adaptability doesn’t show up on a CV. It shows up in the conversation, in the setup demo, and in the side projects of the last 90 days. Anyone who tests for that finds the right senior engineers. Anyone filtering on track-record phrases screens them out.

This article is the sourcing deep-dive in our cluster on Agentic Engineering and Hiring 2026. It shows which four behavioral anchors replace CV phrases in the brief, in which five channels you actually find the right senior profiles, and why hybrid teams of internal and external profiles ship AI initiatives to production twice as often in 2026.

Why the filter doesn’t structurally fit

A CodeSignal survey of 450 US software engineers from March 2026 shows how far the practice has shifted in twelve months: 91 percent use agentic AI tools in their daily work, and 75 percent have shipped production code in the last six months that was partially or primarily AI-generated. In other words, three out of four engineers already work agentically in production. Hardly any of them has the three-year track record that classic briefs demand.

Three structural effects sharpen the problem.

First, tool gating in German corporates. A 2025 YouGov survey shows that 77 percent of STEM professionals in Germany use AI tools like ChatGPT, Gemini, or Claude without their own IT’s approval. Just under a quarter do so daily. 29 percent say they’re learning things their employer doesn’t even offer. Tool maturity in corporates therefore often grew in parallel to policy, not through it.

Second, release velocity of the tools themselves. The OECD puts the half-life of technical knowledge at around five years, in IT at two to three. With agentic tools in 2026, industry practice puts it at a few months. Some skills move from “in demand” to “obsolete” in under two months. A three-year productive track record requirement carries no sensible signal under those cycles. A senior who built production pipelines 18 months ago is running a different stack today.

Third, team inertia as an adoption brake. The Bitkom study 2025/2026 shows that 35 percent of companies have active upskilling programs, meaning 65 percent don’t primarily rely on upskilling. Compliance requirements, tool whitelists, lengthy procurement, peer alignment: all of this systematically slows adoption in enterprise environments. In April 2026, Personio said publicly that it had set up its own change-management program just to clarify internal expectations around AI use. Coming from the German tech mid-market, that is a notable signal of how much structural effort goes into clarifying internal practice alone.

The hiring filter therefore tests for a combination that is structurally improbable. It filters out exactly those candidates whose learning curve ran alongside the corporate environment, not inside it.

Adaptability isn’t a CV item

Senior engineering maturity in 2026 isn’t accumulated experience, it’s a speed. Three components count:

- Learning speed: When did the candidate last voluntarily abandon a working workflow because something better arrived?

- Builder mindset: Does the candidate have side projects, GitHub activity, their own skills repositories, half-finished experiments outside regular work?

- Frustration tolerance for pioneering: Can the candidate stand the fact that a new tool is worse than their proven workflow for the first ten sessions, and stick with it until it’s better?

None of these components reliably appears as a bullet point on a CV. They show up in the 4-phase interview format and in the setup demo. One number makes the difference measurable: the Freelancer-Kompass 2025, with 3,210 German-speaking freelancers, shows that 61 percent of IT freelancers actively invested in further training this year, at an average of 24 to 36 days per year. For permanent employees in corporates, the comparable number is lower because internal training runs on consensus. A freelancer who isn’t current doesn’t win the next project. That necessity doesn’t replace talent, but over years it produces a different learning habit that you can’t retrofit in hiring.

Five phrases that narrow the filter unintentionally

These five phrasings, taken from active CTO briefs in 2025/2026, filter out exactly the right candidates:

| Filter phrase in the brief | What you’re actually filtering |
|---|---|
| “Minimum three years of productive experience with AI agents in an enterprise context” | Profiles that don’t exist that way; productive practice is six months old |
| “Track record with multi-agent architectures in a corporate environment” | Candidates with a good-looking CV whose tool maturity got throttled by internal restrictions |
| “Deep experience with productive LLM engineering in a regulated environment” | Compliance specialists, not a filter for lead profiles |
| “Senior with verifiable AI projects at million-user reach” | Profiles from large tech firms, many of them organizationally distant from hands-on coding |
| “At least 5 years as tech lead in an AI-first company” | A double-digit number of profiles worldwide, none of them available on the German-speaking hiring market |

Hiring managers are trying to use seniority markers from 2019 to hit a field that didn’t exist in 2019. The honest senior practitioners who invest 1,000+ euros monthly in subscriptions, write their own skills, and build side projects fall through the CV sieve because their actual practice doesn’t show up in classic bullet points.

Four behavioral anchors that belong in the brief

In our mandates we replace track-record filters with four precise behavioral anchors.

Current tool practice over track-record length. Which subscription does the candidate run today? What’s their monthly token spend? Which tool did they replace in the last 90 days? When did they last switch a workflow? These questions deliver more substance in 30 seconds than three years of AI experience in a bullet list. We cover the calibration of these questions in detail in the token-spend context.

Track record alone says nothing about the quality of tool use. GitClear’s analysis of 153 million lines of code shows that the share of code that has to be rewritten within two weeks has risen from 33 to 40 percent since AI tools went mainstream. For the first time in the measurement, copy-pasted code appears more often than refactored or reused code. Tools alone don’t make anyone better. What counts is how they’re used: diff discipline, spec hygiene, plan mode, review routines. Track-record filters don’t test that. Behavioral anchors do.

Own skills and side projects over accumulated corporate years. Does the candidate have their own skill repositories? Do they write slash commands? Do they have side projects that show they build outside work hours? The Stack Overflow Developer Survey 2025 shows: 69 percent of all developers learned new tech this year, 67 percent of them for personal projects or workplace AI use cases. Roughly half your hiring pool is actively learning. The right question is: what has the candidate built in the last 90 days that wasn’t an office assignment?

Adaptation speed over linear career. When did the candidate last voluntarily abandon a working workflow because something better arrived? What was the last method they were convinced of and discarded again within three months? On a CV, this question is invisible. In conversation, it’s answered in two minutes.

Hybrid experience over pure-play corporate or pure-play startup. This is the most important differentiation. Anyone who only worked in corporates often has compliance routine and frustration tolerance, but little experimental drive. Anyone who only worked in young startups knows iteration and tool switches, but rarely learned audit, security, and scaling discipline. The most valuable profiles are CVs with both: about five years of corporate phase earlier, then several years in more agile environments, now self-employed or in a scale-up. Having both in one person is possible but rare. Having both within a team is more common.

Where to find these profiles

Classic IT job boards filter on CV phrases, that is, on the signals that aren’t meaningful in 2026. Anyone who wants to hire on behavioral anchors searches in different places.

GitHub activity. The most honest public proof of builder mindset. What counts isn’t stars but frequency and substance: regular commits in the last 90 days, own skill or slash-command repositories, half-finished experiments, forks of agentic tools with their own modifications. Senior practitioners in 2026 typically have several small, maintained projects rather than one big one.
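The “frequency in the last 90 days” signal can be approximated with a few lines of code. The following is a minimal sketch in Python, assuming the commit dates have already been collected from a candidate’s public repositories by some other means. The function name `commit_activity`, the 90-day default, and the returned fields are illustrative assumptions, not part of any tool named in this article.

```python
from datetime import date, timedelta


def commit_activity(commit_dates, today, window_days=90):
    """Summarize how recent and how spread out a list of commit dates is.

    commit_dates: iterable of datetime.date objects (hypothetical input,
    gathered beforehand from public repositories).
    """
    cutoff = today - timedelta(days=window_days)
    recent = [d for d in commit_dates if cutoff <= d <= today]
    # Count distinct ISO (year, week) pairs so that one burst of commits
    # on a single weekend does not look like sustained activity.
    active_weeks = {tuple(d.isocalendar())[:2] for d in recent}
    return {
        "commits": len(recent),
        "active_weeks": len(active_weeks),
        "days_since_last": (today - max(recent)).days if recent else None,
    }


if __name__ == "__main__":
    # Made-up sample dates, for illustration only.
    sample = [date(2026, 4, 28), date(2026, 4, 27), date(2026, 3, 15)]
    print(commit_activity(sample, today=date(2026, 5, 1)))
```

Counting active weeks rather than raw commits is a deliberate choice here: it rewards regularity over volume. The numbers say nothing about substance, which still needs a human look at what the commits actually contain.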

Technical newsletters and Substacks. Anyone who writes regularly about agentic workflows usually runs the workflow too. Pragmatic Engineer, Latent Space, AI Engineer Newsletter, Simon Willison’s blog. In the German-speaking space, the active writer layer is small, but the profiles are all the more visible.

Conference speakers and meetup lists. AI Engineer Europe, Heise developer conferences, local meetups in Berlin, Munich, Vienna, Zurich. Speakers who talked about agentic workflows in 2025 or 2026 usually run the matching stack. Workshop leaders at AI academies are a secondary source, with one calibration: anyone who trains professionally doesn’t necessarily build daily themselves.

Communities and Discord servers. Anthropic Builders Slack, Cursor Discord, Aider GitHub Discussions, the OpenAI forum’s Agents area. Active profiles there are often the senior practitioners you don’t find on the classic market. Higher effort than CV review, much better hit quality.

Specialized intermediaries. Classic recruiters operate with keyword matching on CVs. Anyone looking for agentic profiles needs a partner who has calibrated the behavioral anchors themselves and runs discovery calls accordingly. That’s the gap we work in at ElevateX.

What we check in all channels is the same: currency (last commit, last post, last conference attendance in the current or previous year), substance (own examples, not just reposts), and consistency across multiple platforms.

Why hybrid teams are the right answer

A recurring observation from CTO calls in 2026: internal teams are on average slower in adoption than the market, and there are good structural reasons for that. Permanent employment teaches and rewards consistency, maintainability, and risk avoidance because that serves most work. Tool whitelists and compliance reviews protect the company but stretch experimentation cycles. Peer alignment secures quality but costs pace on solo pushes. These aren’t weaknesses of the people. They’re properties of the environment they work in.

External senior practitioners have the inverse setup. They have to adopt regularly because their business model forces market relevance. The next project acquisition depends on it. They have less tool gating, more frequent context switches, more practice in switching itself. In return they often have less internal compliance routine and less experience with multi-year migrations.

The right answer to this asymmetry isn’t “replace permanent employees.” The right answer is hybrid: a deliberately mixed setup of internal compliance routine and external experimental drive, in which both profiles calibrate each other. A 2026 Upwork survey delivers the clearest data point in the hiring debate. Of the 77 percent of organizations working with blended teams of permanent employees and external specialists, 40 percent successfully ship AI initiatives to production. For organizations relying solely on internal permanent-employee structures, it’s 20 percent. That is twice the success rate: not gut feel, but a structural observation across business models.

You don’t need one person with the perfect hybrid. You need two whose profiles complement each other, plus the right instructions on how they work together. How exactly this hybrid model looks operationally, who takes which role, how the external senior leaves on plan, where the typical pitfalls are, we cover in The Pacemaker Model for Engineering Teams (coming soon).

On the objection: “But compliance”

When the brief shifts from “three years of enterprise track record” to “current tool maturity plus adaptation muscle,” the same objection keeps coming up in CTO calls: “But we’re regulated. We can’t bring in shadow-IT people who use tools that aren’t approved.”

Three answers to that.

First, the practical point. Tool maturity and compliance awareness aren’t opposites. Senior practitioners who take agentic work seriously know sandbox boundaries, read-only defaults, pre-tool hooks, worktrees, and git hooks as a safety net. They have usually learned secrets hygiene early and painfully. Anyone who has worked across multiple mandates in different compliance regimes often understands compliance logic more operationally than someone sitting in only one regime, simply because of the comparative experience.
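To make “git hooks as a safety net” concrete, here is a minimal sketch in Python of the kind of check a pre-commit hook might run on staged changes. It assumes the hook feeds it unified diff text (for example the output of `git diff --cached`). The function name `find_secret_like_lines` and the three patterns are illustrative assumptions, far from a complete secrets scanner.

```python
import re

# Illustrative patterns only; a real setup would rely on a maintained scanner.
SECRET_PATTERNS = [
    re.compile(r"AKIA[0-9A-Z]{16}"),  # shape of an AWS access key ID
    re.compile(r"-----BEGIN (?:RSA |EC |OPENSSH )?PRIVATE KEY-----"),
    re.compile(
        r"(?i)(?:api[_-]?key|secret|token|password)\s*[:=]\s*['\"][^'\"]{8,}['\"]"
    ),
]


def find_secret_like_lines(diff_text):
    """Return (line_number, content) for added diff lines that look like secrets."""
    hits = []
    for number, line in enumerate(diff_text.splitlines(), start=1):
        # Only inspect added lines; skip the "+++ b/file" header lines.
        if not line.startswith("+") or line.startswith("+++"):
            continue
        if any(pattern.search(line) for pattern in SECRET_PATTERNS):
            hits.append((number, line[1:].strip()))
    return hits
```

A hook script could call this function on the staged diff and exit with a non-zero status when the returned list is not empty, which blocks the commit. The point of the sketch is the habit, not the patterns: the check runs before anything leaves the machine.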

Second, the structural point. The EU AI Act brings additional compliance obligations in 2026, depending on use case and risk class. Concrete legal assessment belongs with the legal team, not in a hiring article. What can be said generally: compliance load isn’t solved by non-adoption, only by structured adoption. Anyone not building productive AI practice in 2026 will have neither mature compliance practice nor operational AI practice in 2027. Both have to grow in parallel.

Third, the open point. “But compliance” is sometimes the honest answer in a CTO call and sometimes the convenient one. Both are understandable. The honest answer describes concrete requirements that the external practitioner has to meet. The convenient answer stays general and pushes the topic into the compliance department without clarifying whether the concerns actually apply in the specific context. The difference shows up the moment you ask concretely: which tools are forbidden? Which data may not enter the context? Which process steps must be documented? Anyone who can answer those directly has compliance under control. Anyone who evades hasn’t clarified it yet, and that’s a solvable task.

Operationally that means: anyone in a regulated context clarifies in the brief which compliance requirements actually apply. External senior practitioners clarify in a discovery call within ten minutes whether they can meet the compliance profile. Most can. The others screen themselves out.

When you’re looking for a senior who delivers from day one

The most resource-intensive option in 2026 hiring isn’t onboarding someone who still has to learn. Most good hires learn quickly in the first weeks. The most resource-intensive option is searching for months for a CV profile that couldn’t have emerged in the form requested.

We place senior freelancers with current tool maturity, their own skill repos, and a hybrid CV who work with internal teams without running them over. Drop me a line on LinkedIn about where you stand. Or send a concrete request to our team. No pitch, an honest assessment within 48 hours.
